DeepSeek vs ChatGPT is no longer a “which chatbot is better?” question.

For executives, it’s a strategic choice between:

- An open, engineerable model stack you can deploy and tune.

- A highly productized AI workspace with tools, governance, and a flagship model that adapts its reasoning depth to the task.

ChatGPT now centers on GPT‑5.2 and offers Auto routing between Instant and Thinking, with a slim “chain of thought” view and an “Answer now” control for speed.

DeepSeek’s open-source DeepSeek‑R1 is a first-generation reasoning model trained with large-scale reinforcement learning, built on a Mixture-of-Experts base (671B total parameters, 37B activated) and released under an MIT license.

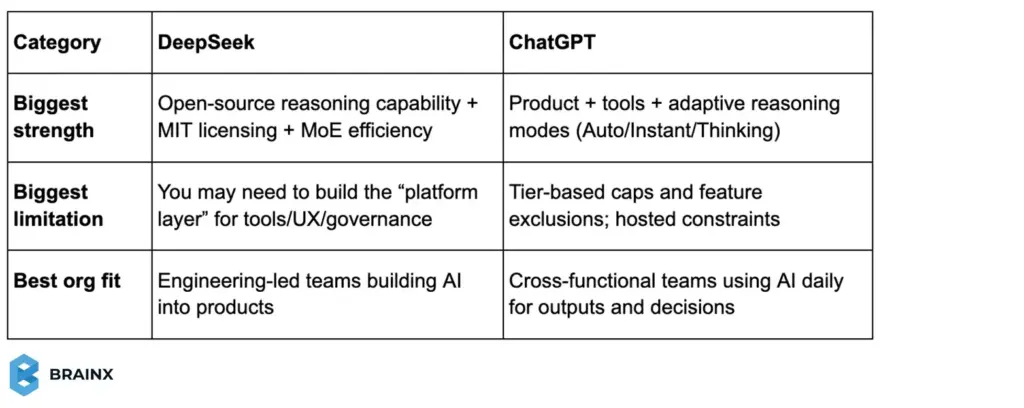

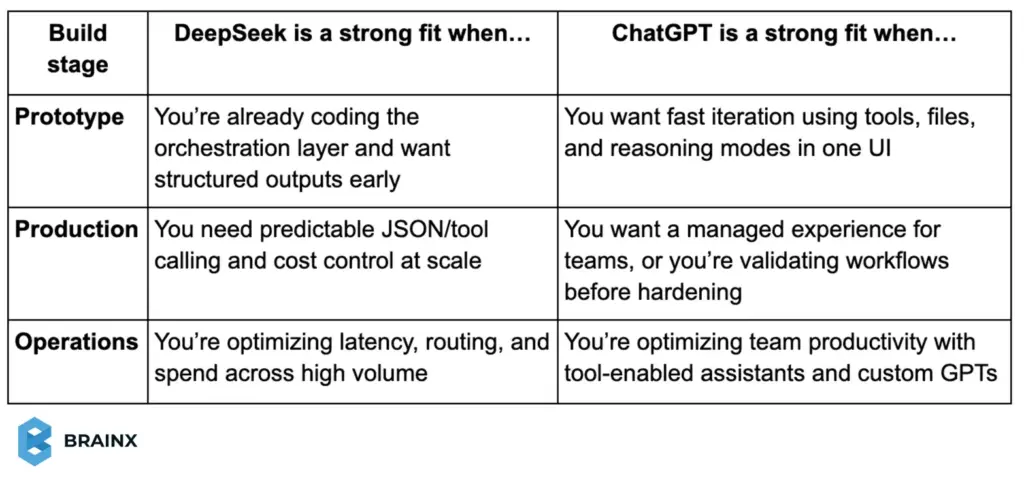

For teams:

- Choose DeepSeek when you need deployment control and customization.

- Choose ChatGPT when you need a polished UX and integrated tools (search, data analysis, files, images, Canvas, memory) that accelerate day-to-day work without building your own platform.

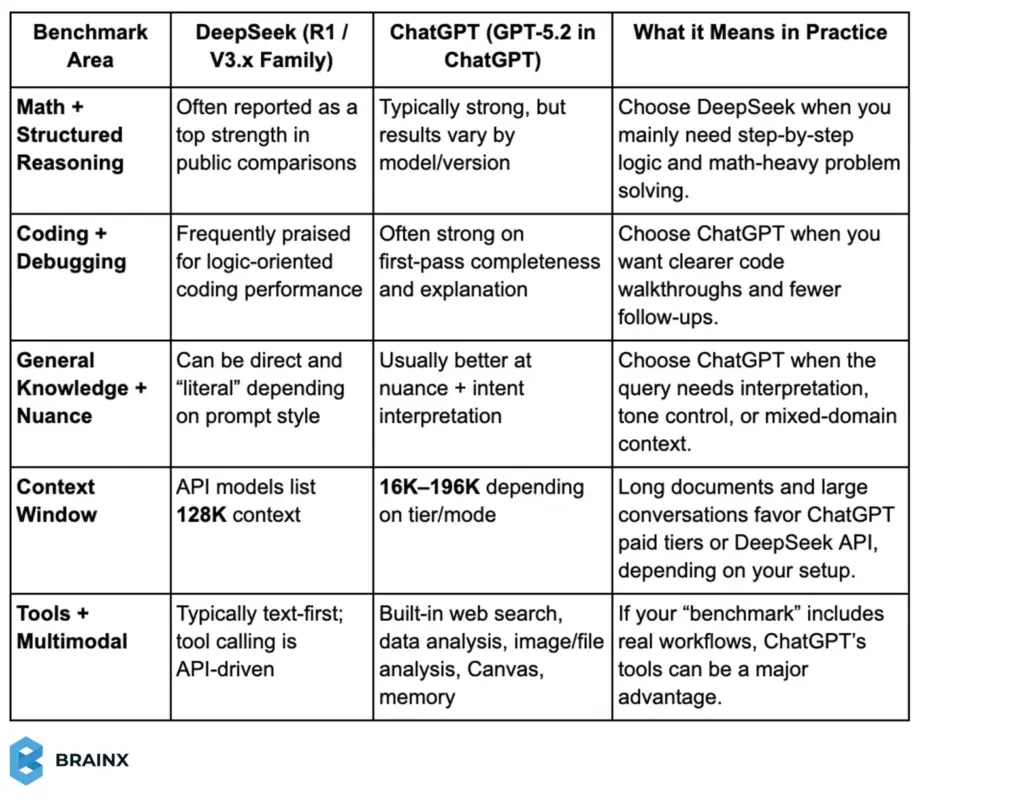

Performance Benchmark Testing

Benchmarks are useful for spotting patterns, not declaring a permanent winner. Most public head-to-head numbers you’ll see online were run on earlier ChatGPT generations, while ChatGPT now defaults to GPT-5.2 in the product, so absolute scores can shift over time. Still, recent third-party summaries consistently show DeepSeek performing especially well on structured math/logic, while ChatGPT tends to be stronger when tasks blend reasoning with broad context and tool-assisted workflows.

DeepSeek vs ChatGPT Benchmarks

Note: If you want the fairest internal test, run the same prompts on your real tasks (coding tickets, support macros, docs, and SOPs) and score outcomes like accuracy, time saved, and revision cycles.
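One way to make that internal test concrete is a small scoring script. The sketch below is illustrative only: the `TaskResult` fields and the `summarize` helper are hypothetical names chosen for this example, and the scores are assumed to come from a human reviewer rather than from either vendor.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class TaskResult:
    """One prompt run on one assistant, scored by a reviewer (hypothetical schema)."""
    tool: str              # e.g. "deepseek" or "chatgpt"
    task_type: str         # e.g. "coding ticket", "support macro"
    correct: bool          # did the output meet the acceptance criteria?
    minutes_saved: float   # reviewer's estimate versus doing the task by hand
    revision_cycles: int   # rounds of edits before the output was usable


def summarize(results: list[TaskResult]) -> dict[str, dict[str, float]]:
    """Aggregate accuracy, time saved, and revision cycles per tool."""
    summary: dict[str, dict[str, float]] = {}
    for tool in sorted({r.tool for r in results}):
        rows = [r for r in results if r.tool == tool]
        summary[tool] = {
            "accuracy": mean(1.0 if r.correct else 0.0 for r in rows),
            "avg_minutes_saved": mean(r.minutes_saved for r in rows),
            "avg_revision_cycles": mean(r.revision_cycles for r in rows),
        }
    return summary
```

Running the same task list through both tools and comparing the two summary rows gives a like-for-like view that public benchmarks cannot.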

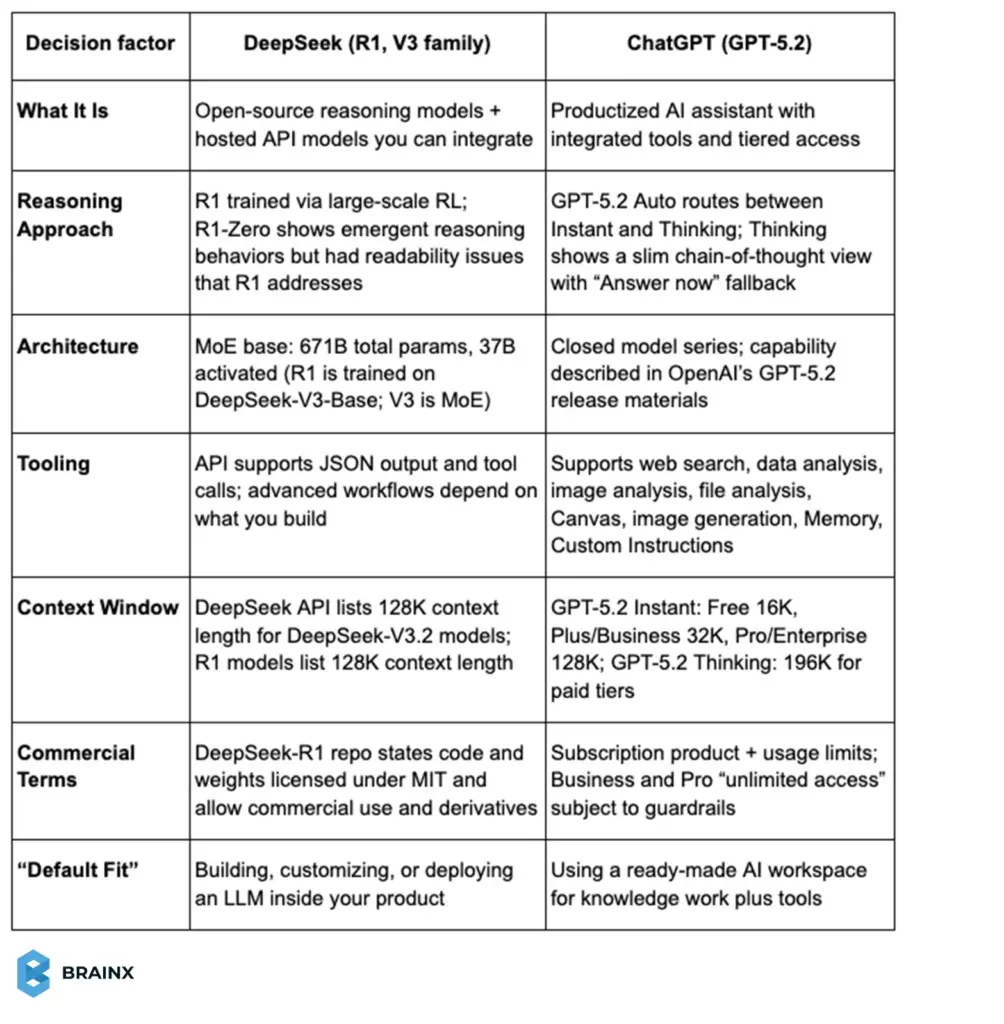

DeepSeek vs ChatGPT Comparison: Key Differences at a Glance

DeepSeek and ChatGPT represent two different “ends” of the modern AI adoption spectrum. DeepSeek is best understood as an open model ecosystem—anchored by models like DeepSeek‑R1 and DeepSeek‑V3—optimized for reasoning and cost efficiency, and designed to be integrated, self-hosted, and adapted.

ChatGPT, by contrast, is a full AI product environment that now defaults to GPT‑5.2 and includes built-in tools (web search, data analysis, image and file analysis, Canvas, image generation, memory, and custom instructions), plus a model picker for paid tiers.

This DeepSeek vs ChatGPT comparison matters today because “model quality” alone no longer determines business value. Delivery mode (API vs product UI), reasoning controls, tool access, context length, message caps, privacy requirements, and integration effort now drive total cost and total impact.

In this report-style blog post, we’ll break down:

- What each platform does best

- Where each has real limitations

- How to choose based on practical SaaS and enterprise workflows

The fastest way to evaluate these tools is to separate model architecture and availability from end-user workflow experience. DeepSeek is strongest when you want an open stack you can control; ChatGPT is strongest when you want an “AI operating system” with advanced tools and predictable UX.

Summary comparison table

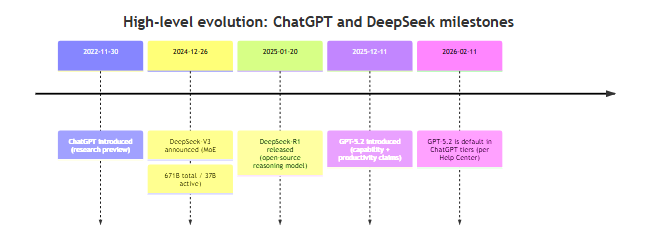

Timeline of Model Evolution

The timeline below highlights milestone releases that shape today’s capabilities and buying decisions.

What Is DeepSeek AI?

DeepSeek AI is an open model ecosystem built around high-efficiency Mixture-of-Experts language models and reasoning-focused post-training. DeepSeek‑V3 is explicitly described as a Mixture-of-Experts (MoE) model with 671B total parameters and 37B activated per token, trained on 14.8T tokens, and released with open-source artifacts and a technical report.

Core purpose and positioning: DeepSeek’s positioning is “performance-per-dollar plus openness.” Its DeepSeek‑R1 line focuses on reasoning capability: DeepSeek‑R1‑Zero is trained via large-scale reinforcement learning without supervised fine-tuning as a preliminary step, and DeepSeek‑R1 adds “cold-start data” before RL to improve readability and usability.

DeepSeek‑R1 and R1‑Zero are open-sourced, and the repository states that both code and model weights are MIT licensed and support commercial use and derivative works (including distillation).

A practical note for business readers: DeepSeek is a Chinese company based in Hangzhou, China, and it captured attention worldwide with low-cost models and open-source releases that caused pricing responses throughout the market.

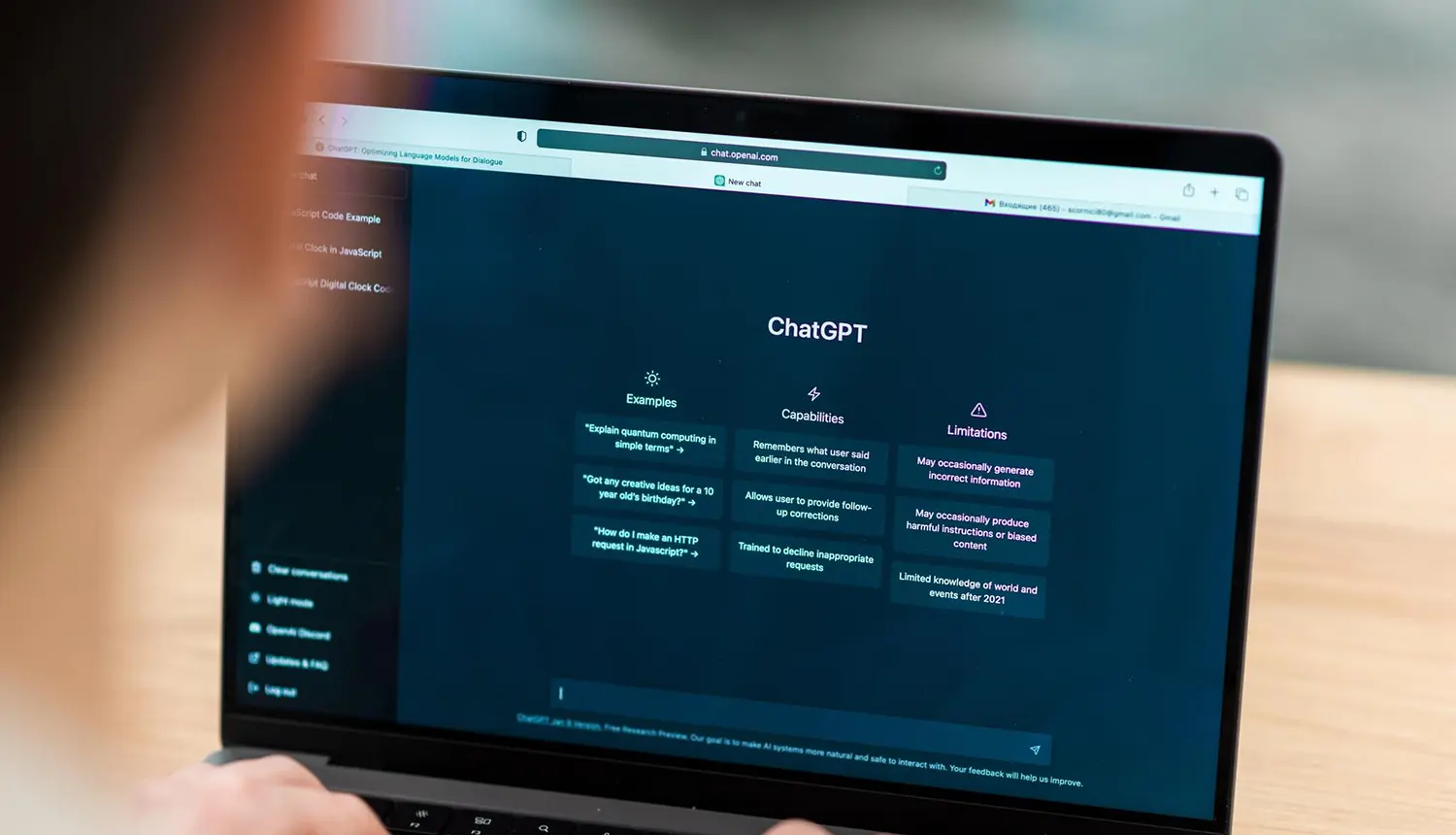

What Is ChatGPT?

ChatGPT is a product platform from OpenAI that packages frontier models into a consumer- and enterprise-friendly workspace. ChatGPT was introduced in November 2022 as a conversational interface for a model fine-tuned from the GPT‑3.5 series using reinforcement learning from human feedback.

Core purpose and positioning: ChatGPT’s core purpose is to turn frontier model quality into repeatable productivity. As of February 2026, ChatGPT’s Help Center states that GPT‑5.2 is available to all ChatGPT tiers and is the default model family, with paid users able to manually select Instant or Thinking via the model picker.

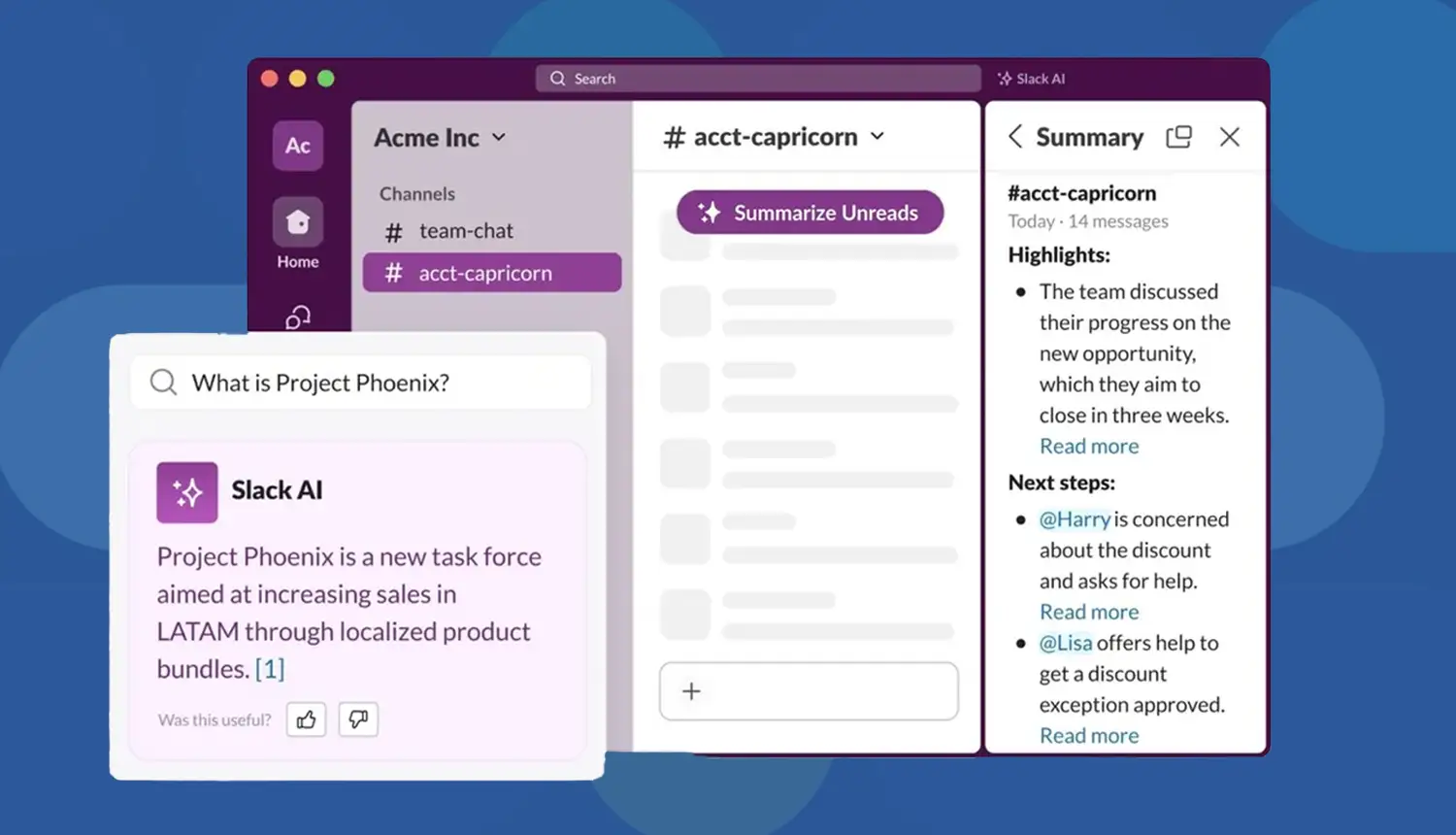

ChatGPT’s product differentiator is its integrated tooling layer—web search, data analysis, image and file analysis, Canvas, image generation, Memory, and Custom Instructions—so users can execute workflows inside one interface rather than stitching together separate tools.

Feature Comparison: DeepSeek vs ChatGPT

This section focuses on “capability on the page”—what each system is built to do well—rather than pricing or UI, which we’ll cover later.

Reasoning and Accuracy

This DeepSeek AI vs ChatGPT comparison starts with a key question: How does each system behave when the task requires multi-step reasoning and the answer must be correct—not just plausible?

DeepSeek’s Reasoning Design (R1)

DeepSeek positions R1 as a first-generation reasoning model trained with a pipeline centered on reinforcement learning and chain-of-thought exploration. The R1 repository describes two related models:

- DeepSeek‑R1‑Zero: trained via large-scale RL without SFT first, showing emergent reasoning behaviors (e.g., long chains of thought, self-verification) but also issues like repetition and language mixing.

- DeepSeek‑R1: adds “cold-start data” before RL to improve usability while retaining reasoning performance.

From an accuracy perspective, this matters because RL-trained reasoning models tend to be better at “structured correctness” (math steps, logic chains, consistent constraints). DeepSeek’s own materials claim DeepSeek‑R1 achieves performance comparable to OpenAI’s o1 on math, code, and reasoning tasks.

ChatGPT’s Reasoning Design (GPT‑5.2)

GPT‑5.2 introduces an explicit reasoning control surface inside ChatGPT. With GPT‑5.2 Auto, ChatGPT can decide when to use Instant or Thinking based on prompt signals and learned patterns from user choices and correctness.

When GPT‑5.2 is in reasoning mode, ChatGPT shows a slimmed chain-of-thought view, and you can click Answer now to switch back to Instant for speed.

What this means in practice:

- If your organization values transparent “reasoning effort” control, ChatGPT’s Instant/Thinking split is a genuine product advantage because it lets teams standardize when deeper reasoning should be used (e.g., use Thinking for “ship a patch” coding tasks; use Instant for drafting internal updates).

- If your organization values model-level openness (you want to run your own inference, distill for performance, or integrate with proprietary tools behind a firewall), DeepSeek’s open-source release and MIT-licensed weights are hard to beat.

Where accuracy matters most, a practical rule of thumb is:

- Prefer DeepSeek when the task is “bounded” (clear constraints, code, math) and you plan to wrap it with deterministic checks anyway.

- Prefer ChatGPT when the task needs tool-backed verification (search, file analysis, or data analysis) to reduce hallucination risk through grounded context.
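To illustrate what “wrapping a model with deterministic checks” can look like, here is a minimal sketch. It assumes the model was asked to return an invoice summary as JSON; the schema (`items`, `amount_cents`, `total_cents`) and the function name are hypothetical and not part of either vendor's API.

```python
import json


def check_invoice_answer(raw_model_output: str) -> list[str]:
    """Return a list of problems found; an empty list means the output passed."""
    try:
        data = json.loads(raw_model_output)
    except json.JSONDecodeError as exc:
        return [f"not valid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return ["top-level value is not an object"]

    items = data.get("items")
    total = data.get("total_cents")
    if not isinstance(items, list) or not items:
        return ["missing or empty 'items' list"]

    problems = []
    amounts = []
    for i, item in enumerate(items):
        amount = item.get("amount_cents") if isinstance(item, dict) else None
        # bool is a subclass of int in Python, so exclude it explicitly.
        if isinstance(amount, int) and not isinstance(amount, bool) and amount >= 0:
            amounts.append(amount)
        else:
            problems.append(f"item {i}: 'amount_cents' must be a non-negative integer")

    if not isinstance(total, int) or isinstance(total, bool):
        problems.append("'total_cents' must be an integer")
    elif not problems and total != sum(amounts):
        # The arithmetic is recomputed in code rather than trusted from the model.
        problems.append(f"total_cents is {total}, but items sum to {sum(amounts)}")
    return problems
```

An output that fails the check can be retried or sent to human review, so the model's reasoning quality matters less than whether the final answer survives verification.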

Coding and Technical Tasks

For SaaS and product teams, coding performance is less about writing a single snippet and more about supporting a workflow: reading requirements, manipulating files, reasoning across codebases, and producing patches or refactors.

DeepSeek for Coding

DeepSeek‑V3’s training and architecture were built for efficient inference and strong performance, and DeepSeek’s own V3 materials emphasize MoE efficiency (only 37B parameters are active per token) and large-scale training (14.8T tokens) followed by SFT and RL.

DeepSeek‑R1 adds a reasoning layer on top, and the R1 repo highlights performance claims across math, code, and reasoning, plus distilled models intended to bring reasoning patterns into smaller dense checkpoints.

Why does that matter for engineering teams? An open reasoning model is especially attractive for:

- Internal developer tools (code review helpers, test generators, incident summarizers) where the data is sensitive and should not leave your environment.

- Cost-sensitive, high-volume use cases where per-token pricing and inference efficiency are major factors.

ChatGPT for Coding

OpenAI’s GPT‑5.2 release materials describe GPT‑5.2 Thinking as improving real-world software engineering performance (including on SWE-Bench Pro and SWE-bench Verified) and translating those gains into more reliable debugging, refactors, and end-to-end implementation.

In ChatGPT specifically, GPT‑5.2 supports tool use—especially data analysis and file analysis—which changes the coding experience from “chat for code” to “work with artifacts.”

A SaaS-friendly way to compare them:

- If your developers need an assistant while they code (reading logs, reproducing bugs, running analysis, reasoning step by step, and keeping context across long threads), ChatGPT often wins on speed-to-outcome because it already includes the relevant tools and long context windows.

- If your product needs an LLM as infrastructure (embedded inference, adjustable prompts and routing, cost management, deployment options), DeepSeek is very attractive because its openness lets you use the model as a component rather than a product subscription.

Content and Creativity

Most leadership teams care about content generation for one of three reasons: marketing velocity, internal communication, or customer-facing content (support, onboarding, docs). “Creativity” in business context usually means: tone control, audience adaptation, and consistency.

DeepSeek for Content

DeepSeek’s public narrative emphasizes reasoning and technical strength. Still, recent reporting describes improvements in R1 updates for writing and summarizing tasks, including reduced hallucinations and stronger output quality in rewriting and summarization scenarios.

For teams that implement DeepSeek via API, content quality can be “productized” through structured prompts, templates, style guides embedded into system prompts, and post-processing checks.

ChatGPT for Content

OpenAI describes GPT‑5.2 Instant as a fast workhorse with improvements in information-seeking queries, “how‑tos,” technical writing, and translation, and GPT‑5.2 Thinking as designed for deeper work like summarizing long documents and answering questions about uploaded files with clearer structure.

In other words: ChatGPT’s advantage is not only raw writing quality, but the ability to bring files, images, and research tools into the same content workflow.

For SaaS marketing teams, the practical difference is operational:

- DeepSeek is the better fit when you want to build generation into your CMS or pipeline. Example: generating 10,000 help center drafts at low cost, then reviewing them.

- ChatGPT is better when you want to collaborate in a UI with tools and memory. Example: daily campaign iteration, briefs, and rapid rewrites.

Customization and Integration

For SaaS teams, customization is not just tone. It’s the ability to plug the model into real workflows like product support, internal tools, RAG, and agent pipelines. Integration quality shows up in how easily you can route requests, enforce schemas, and connect tools safely.

DeepSeek for Customization and Integration

DeepSeek is attractive when you want the model to behave like infrastructure. DeepSeek-R1 is open-weight, which supports self-hosting and deeper customization for internal use cases.

On the integration side, the DeepSeek API is designed to be OpenAI-compatible, and its current deepseek-chat and deepseek-reasoner models support JSON output and tool calls. It’s a combination that makes it easier to embed DeepSeek inside products where structured outputs and tool routing matter.
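As a rough illustration of that OpenAI-compatible shape, the sketch below builds a chat request that asks `deepseek-chat` for a JSON object. The endpoint path and the `response_format` field reflect DeepSeek's public API documentation as I understand it, so verify them against the current docs. The ticket-classification prompt and the function names are hypothetical.

```python
import json
import urllib.request

# Assumed endpoint; confirm against DeepSeek's current API documentation.
DEEPSEEK_URL = "https://api.deepseek.com/chat/completions"


def build_request(ticket_text: str) -> dict:
    """Build an OpenAI-style chat payload that asks for a JSON object back."""
    return {
        "model": "deepseek-chat",
        "messages": [
            {
                "role": "system",
                # JSON mode generally expects the prompt itself to mention JSON.
                "content": "Classify the support ticket. Reply in JSON with "
                           "keys 'category' and 'urgency'.",
            },
            {"role": "user", "content": ticket_text},
        ],
        "response_format": {"type": "json_object"},
    }


def send(payload: dict, api_key: str) -> dict:
    """POST the payload and return the parsed response body (network call)."""
    request = urllib.request.Request(
        DEEPSEEK_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=60) as response:
        return json.loads(response.read().decode("utf-8"))
```

Because the payload follows the OpenAI chat format, teams that already use an OpenAI client library can usually point it at a different base URL instead of hand-rolling HTTP calls as this sketch does.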

ChatGPT for Customization and Integration

ChatGPT’s customization is product-led. GPT-5.2 supports Custom Instructions and the full toolset in ChatGPT, which means you can integrate “work” features like file analysis and data analysis directly into the workflow without building a wrapper layer first.

ChatGPT also supports custom GPTs that combine instructions, extra knowledge, and optional capabilities like browsing or data analysis. This is a practical way to standardize behavior across teams.

Simply put: If you need an LLM as a component inside your product with control over deployment and structured outputs, DeepSeek is a strong fit.

If you need fast adoption across roles with built-in tools and configurable assistants, ChatGPT often wins because the integration is already packaged into the product experience.

Performance and User Experience

Performance is where many evaluations get misleading because “model speed” and “user experience speed” are not the same thing. You should evaluate the full interaction loop:

prompt → tool use → revisions → final artifact.

Speed and Response Quality

- ChatGPT offers GPT‑5.2 Auto, which can switch to GPT‑5.2 Thinking on complex tasks and show a slim chain-of-thought view, with an “Answer now” option to prioritize speed.

- DeepSeek’s speed depends on deployment, but DeepSeek’s own V3 announcement claims high throughput (e.g., “60 tokens/second,” described as faster than previous versions) and focuses on inference efficiency from MoE design.

Interface and Accessibility

ChatGPT’s differentiator is that it’s a cohesive product: model picker (paid), tool selection, file workflows, and integrated features like Memory and Canvas (except Pro limitations noted below).

DeepSeek’s interface experience is more variable: you may use the official web/app experience or build via API. DeepSeek’s R1 release announcement highlights that the website and API were live (“Try DeepThink”), indicating a direct-to-user entry point, but the enterprise-grade UX typically depends on the wrapper you build.

Stability and Consistency

Two stability factors matter: (1) service consistency and (2) plan-based restrictions.

- ChatGPT is explicit about what happens beyond caps: once Free and Plus/Go users reach their GPT‑5.2 message limit, chats switch to a “mini” model until the limit resets.

- DeepSeek stability and availability are often framed through pricing and access (e.g., off-peak pricing strategies and rapid model updates), and Reuters reports DeepSeek’s rapid growth and global attention around R1’s release.

Pricing, Subscriptions and Accessibility

Pricing is not just what you pay—it’s what you can actually use before you hit caps, downgrades, or engineering overhead. This section treats limits as part of the cost model.

ChatGPT pricing and GPT‑5.2 message caps

OpenAI’s Help Center provides very specific GPT‑5.2 limits and behavior by tier:

- Free: up to 10 messages with GPT‑5.2 every 5 hours, after which chats automatically use the mini version until the limit resets.

- Plus/Go: up to 160 messages with GPT‑5.2 every 3 hours, then the same switch to mini.

- Thinking usage (manual selection): if you’re on Plus or Business, you can manually select GPT‑5.2 Thinking with a limit of up to 3,000 messages per week; automatic switching to Thinking does not count toward this weekly limit.

- Go plan Thinking: Go users can enable Thinking via the tools menu and send up to 10 messages every 5 hours after enabling Thinking.

- Business and Pro: described as “unlimited access” to GPT‑5.2 models, subject to abuse guardrails and terms.

Two critical nuance points for decision-makers:

- Paid tiers can manually choose Instant vs Thinking, which matters if you’re standardizing workflow quality.

- GPT‑5.2 Pro has feature exclusions. OpenAI notes that Apps, Memory, Canvas, and image generation are not available with Pro.

DeepSeek API Pricing and Access Limits

DeepSeek publishes token pricing directly in its API docs for its primary hosted models (as of the documentation displayed in February 2026):

- Model versions: deepseek-chat and deepseek-reasoner are listed as DeepSeek‑V3.2 (with non-thinking and thinking modes).

- Context length: 128K.

- Max output: deepseek-chat default 4K (max 8K); deepseek-reasoner default 32K (max 64K).

- Features: both list JSON output and tool calls support.

- Pricing (per 1M tokens): cache-hit input $0.028, cache-miss input $0.28, output $0.42 (as shown on its Models & Pricing page). DeepSeek also explicitly notes prices can change and recommends checking the page for the most recent pricing.
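To turn those per-token prices into a budget figure, a small calculator helps. The sketch below hard-codes the prices quoted above, which DeepSeek says can change. The function name and the cache-hit ratio parameter are assumptions made for this example.

```python
# USD per 1M tokens, as quoted above; check the pricing page before relying on them.
PRICE_PER_MILLION_USD = {
    "input_cache_hit": 0.028,
    "input_cache_miss": 0.28,
    "output": 0.42,
}


def estimate_cost_usd(
    input_tokens: int, output_tokens: int, cache_hit_ratio: float = 0.0
) -> float:
    """Estimate spend for a workload, given the share of input tokens served from cache."""
    if not 0.0 <= cache_hit_ratio <= 1.0:
        raise ValueError("cache_hit_ratio must be between 0 and 1")
    hit_tokens = input_tokens * cache_hit_ratio
    miss_tokens = input_tokens - hit_tokens
    total = (
        hit_tokens * PRICE_PER_MILLION_USD["input_cache_hit"]
        + miss_tokens * PRICE_PER_MILLION_USD["input_cache_miss"]
        + output_tokens * PRICE_PER_MILLION_USD["output"]
    )
    return total / 1_000_000
```

For example, 10,000 drafts at a hypothetical 1,500 input and 800 output tokens each is 15M input and 8M output tokens. `estimate_cost_usd(15_000_000, 8_000_000)` comes to about $7.56 with no cache hits, before any engineering or review cost.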

Separately, DeepSeek’s R1 release announcement (January 2025) describes R1 as fully open-source under MIT and provides a snapshot of API access (including “model=deepseek-reasoner”) and then-current pricing.

Which AI is More Cost-Effective? The Practical Pricing Takeaway

- ChatGPT costs are predictable per seat, but your usable capacity depends on caps and tool availability at your tier.

- DeepSeek costs are predictable per token, but your total cost includes engineering time if you need ChatGPT-like UX and orchestration.

As a founder-friendly heuristic:

- If the work is “interactive knowledge work” (writing, planning, analysis with tools), ChatGPT often has a lower time-to-value.

- If the work is “embedded inference in a product,” DeepSeek often has a lower cost-to-serve at scale.

Strengths and Limitations

The goal here is decision support: identify what you gain and what you risk with each option, with an emphasis on SaaS and enterprise reality.

DeepSeek Strengths and Limitations

Strengths

DeepSeek’s biggest strength is openness plus efficiency. DeepSeek‑R1 is open-sourced, cites a reasoning-centric RL pipeline, and is released under an MIT license that permits commercial use and derivatives (including distillation).

It is also grounded in a proven MoE base: DeepSeek‑V3 is described as 671B total parameters with 37B activated per token, trained on 14.8T tokens, and followed by SFT and RL to “fully harness its capabilities.”

For engineering-first teams, these properties translate into: lower marginal inference cost, the ability to self-host, and the ability to build domain-optimized variants.

Limitations

DeepSeek’s limitations tend to be “product” limitations rather than “model math” limitations. If you want the full ChatGPT-style experience—tool selection, file UX, memory, model routing, governance—you typically have to engineer the wrapper.

There are also adoption considerations: Reuters reporting notes DeepSeek stores user information on servers in China (in the context of U.S. adoption concerns), which is relevant if your team is using hosted DeepSeek services rather than self-hosting open weights.

ChatGPT Strengths and Limitations

Strengths

ChatGPT’s strength is that it packages model capability into a complete workflow environment. GPT‑5.2 Auto can switch between Instant and Thinking based on complexity, shows a slim chain-of-thought view in reasoning mode, and provides an “Answer now” button for speed.

GPT‑5.2 supports ChatGPT tools (search, data analysis, image/file analysis, Canvas, image generation, memory, custom instructions), which is often the difference between “useful chat” and “repeatable business workflow.”

OpenAI’s GPT‑5.2 release materials also position the model as improved for professional work, with stated benchmark gains in software engineering and knowledge work tasks.

Limitations

The most important limitation is operational: caps and tier differences. Free and Plus/Go users hit message limits and then automatically switch to mini models.

Another nuance: GPT‑5.2 Pro is described as research-grade intelligence, but OpenAI notes Pro excludes Apps, Memory, Canvas, and image generation—features many product teams assume come “with ChatGPT.”

Finally, since ChatGPT is a hosted product, it might not be suited for use cases where full on-prem control and self-managed compliance boundaries are required. It’s an area where open-source DeepSeek deployments have a structural advantage.

Strengths/Limitations Side-by-Side Table

Best AI Model for Different Users

Choosing between DeepSeek and ChatGPT is easiest when you stop asking “which is better” and start asking “better for whom.” Different users care about different outcomes. Some need natural conversations. Others need workflow automation, governance, and reliability. Technical teams often need controllable infrastructure and strong coding support.

Everyday Users: Conversations

For everyday users, the best model is the one that feels effortless. That usually means understanding intent, keeping the tone natural, and staying helpful across a wide range of topics without constant re-prompting.

DeepSeek for Everyday Conversations

DeepSeek can handle Q&A and general chatting well, especially when prompts are clear and direct. It often feels structured and “to the point,” which some users prefer for quick answers and learning.

ChatGPT for Everyday Conversations

ChatGPT tends to shine in conversational flow. It adapts tone better, supports longer back-and-forth, and feels more “assistive” for planning, explaining, rewriting, and brainstorming. If you also care about voice, images, files, and a smoother interface, ChatGPT’s product experience usually makes it the easier daily driver.

Businesses: Productivity and Automation

For businesses, the question is less about “chat quality” and more about operational value. Can the model reduce ticket time, generate consistent outputs, follow brand and compliance rules, and plug into processes without creating chaos?

DeepSeek for Business Productivity

DeepSeek becomes compelling when you treat it as a component inside your stack. If you need deployment control, cost management at scale, or custom behavior for internal tools, DeepSeek can be a strong base. It’s especially relevant when sensitive data should stay within your environment and you want flexibility in how the system is designed.

ChatGPT for Business Productivity

ChatGPT is often chosen for speed-to-adoption. It’s easier to roll out across teams, and its built-in tools can turn “AI chat” into “AI work.” That matters for teams that want quick wins like drafting support macros, summarizing meetings, reviewing docs, and automating repetitive knowledge tasks without building a full AI layer first.

Developers and AI/ML Teams: Coding and Technical Work

For engineering teams, the best model is the one that supports the entire workflow. That includes reading requirements, reasoning through edge cases, producing code patches, handling logs, and keeping context across long technical threads.

DeepSeek for Developers and AI/ML teams

DeepSeek is attractive when you need model-level control. It fits well for internal developer tools, custom agents, and productized features where the model must behave predictably under your orchestration. It’s also a practical option when you want infrastructure flexibility, including deployment choices and tighter control over cost and routing.

ChatGPT for Developers and AI/ML teams

ChatGPT is often the fastest path to strong developer productivity because the tooling is already integrated. For debugging, refactors, documentation, and analysis-heavy work, it tends to deliver better speed-to-outcome when you’re iterating with files and artifacts rather than just prompting in a blank chat.

Which suits you? If you’re implementing AI inside a product and want full control over behavior and deployment, DeepSeek usually aligns better. If you’re equipping a team with a powerful assistant today for coding, docs, and cross-functional collaboration, ChatGPT often wins on usability and time saved.

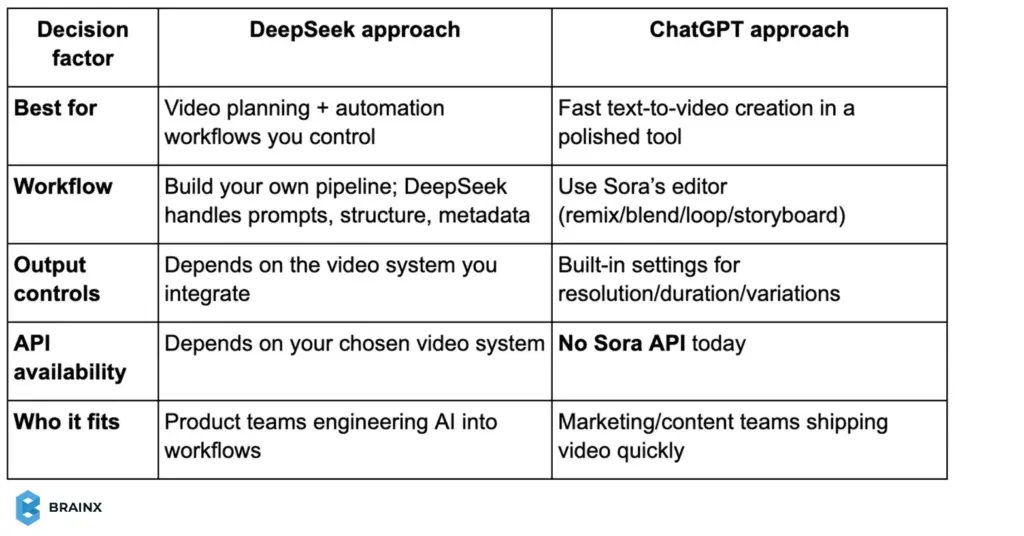

DeepSeek AI vs ChatGPT: Text-to-Video Generation

Text-to-video is quickly becoming a practical advantage for SaaS teams, not a gimmick. It helps with product teasers, onboarding clips, ad variations, and “explainer” content without a full production cycle. In this DeepSeek AI vs ChatGPT comparison, video is one of the clearest places where product experience and tooling matter more than raw text quality.

Text-to-Video in ChatGPT

ChatGPT’s edge here comes from Sora, OpenAI’s video generation product. Sora supports text prompts and can also start from uploaded images or video, then iterate using editor actions like remixing, blending, looping, and storyboard-style sequencing.

From a workflow perspective, it’s built for creators and teams who want outputs fast:

- Up to 20 seconds per generation and adjustable settings like aspect ratio, resolution, duration, and variations.

- Plan-based capabilities: Plus/Business includes up to 480p + 10s, while Pro goes up to 1080p + 20s with faster generations and more concurrency.

- Important for builders: Sora has no API access right now, so you can’t directly embed it into your product pipeline via API.

Text-to-Video in DeepSeek

DeepSeek’s official public model releases are strongest in text reasoning and, on the multimodal side, vision + image generation (for example, Janus supports multimodal understanding and text-to-image generation).

But DeepSeek does not currently present a first-party text-to-video product in its core public model repositories, so “DeepSeek for video” usually means a different approach: use DeepSeek for the planning layer (scripts, shot lists, prompts, captions, storyboards), then pair it with a dedicated video generation system that fits your stack.

DeepSeek and ChatGPT: Which is Better in Text to Video?

If you need video generation as a ready-to-use feature, ChatGPT + Sora is the practical winner today. If you need video as part of an integrated product workflow, DeepSeek can still play a strong role, but typically as the orchestration brain around whatever video generation stack you deploy.

DeepSeek vs. ChatGPT for LLM Fine-Tuning

Fine-tuning is where the “AI chatbot” conversation turns into an AI product conversation. For SaaS teams, the goal is rarely “better writing.” It’s tighter domain behavior, fewer hallucinations in your niche, consistent formats, and responses that match your policies, data model, and tone.

Fine-Tuning and Customization Capabilities

DeepSeek for Fine-tuning

DeepSeek becomes especially interesting because parts of the ecosystem are open-weight, which gives teams the option to fine-tune and deploy on their own infrastructure. DeepSeek-R1 is publicly released via the official repository, making it a realistic path for organizations that want full control over model behavior.

In practice, most teams fine-tune using efficient methods like LoRA (a lightweight technique that adapts a model without retraining everything). Several hosted platforms also support LoRA fine-tuning for DeepSeek V3.x checkpoints, which reduces the ops burden if you don’t want to self-host.
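The reason LoRA is lightweight comes down to parameter counts: instead of updating a full weight matrix, it trains two small low-rank matrices whose product is added to the frozen weights. The sketch below works through that arithmetic. The layer dimensions and rank are hypothetical and are not DeepSeek's actual configuration.

```python
def full_params(d_out: int, d_in: int) -> int:
    """Parameters in one dense weight matrix of shape (d_out, d_in)."""
    return d_out * d_in


def lora_trainable_params(d_out: int, d_in: int, rank: int) -> int:
    """Trainable parameters when the update is factored as B (d_out x rank) @ A (rank x d_in)."""
    return rank * (d_out + d_in)


# Hypothetical layer: a 4096 x 4096 projection adapted at rank 8.
dense = full_params(4096, 4096)               # 16,777,216
adapter = lora_trainable_params(4096, 4096, 8)  # 65,536
fraction = adapter / dense                     # 0.00390625, i.e. about 0.39%
```

In this example the adapter trains well under 1% of the layer's parameters, which is why LoRA runs fit on far smaller hardware budgets than full fine-tuning.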

ChatGPT for Fine-tuning

ChatGPT (running GPT-5.2 in the product) is not positioned as “fine-tune the ChatGPT model.” Instead, customization is primarily product-layer configuration: model selection (Instant/Thinking/Auto), tool use (file analysis, data analysis, etc.), and behavior shaping through instructions and workflows.

If you specifically need model fine-tuning in an application, OpenAI offers fine-tuning for select API models (for example, GPT-4o fine-tuning), which is typically used to lock in tone, structure, and domain-following behavior in production.

When comparing them, think of it as control vs convenience:

- If you need an LLM you can treat like infrastructure (train it, host it, govern it), DeepSeek is appealing.

- If you need fast rollout and strong day-to-day usability, ChatGPT wins by packaging intelligence + tools into a product teams actually adopt.

If you’re building a product feature around the model, DeepSeek fine-tuning can be a strategic advantage. If you’re optimizing team output quickly, ChatGPT’s customization is usually “enough,” and faster to operationalize.

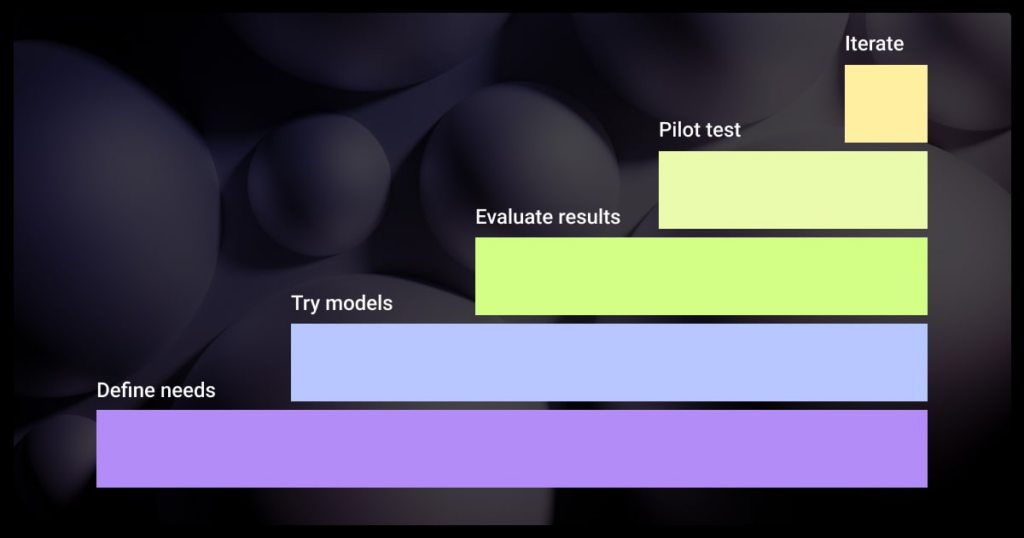

Best Use Cases: When to Use DeepSeek vs ChatGPT

Rather than declaring a winner, align the tool to the workflow.

Developers and Technical Teams

Use DeepSeek when you are building:

- Internal tooling that must run behind a firewall (CI assistants, incident bots, regulated data workflows). The MIT license and open weights make this possible.

- LLM features inside your product that need predictable per-token pricing and engineering control (routing, caching, guardrails, evaluation).

Use ChatGPT when your dev team needs:

- A fast “workbench” that can use tools like data analysis and file analysis to accelerate debugging, documentation, and analysis—without building an internal UI.

- A standardized reasoning toggle (Instant vs Thinking) for higher-stakes tasks.

Startups and Enterprises

Use DeepSeek when you are optimizing:

- Unit economics for an AI feature at scale (token pricing + inference efficiency).

- Deployment control and long-term independence from a single vendor's UX and tier structure.

Use ChatGPT when the goal is:

- Quick productivity gains across functions (product, marketing, operations, sales, support), supported by built-in tools and long-context handling of documents.

- A managed environment with clear usage policies and guardrails (especially at Business/Pro tiers).

An enterprise IT nuance: if your employees use DeepSeek via hosted services, Reuters reports DeepSeek stores user information on servers in China—something many security teams will want to assess.

If you self-host open models, that risk profile changes substantially.

Content and General Users

Use ChatGPT when you need:

- High-quality writing and tool-based workflows (summarize an upload, generate a plan, analyze a spreadsheet, create an image).

- Fast iteration with consistent UX and memory-driven personalization (except for Pro exclusions).

Use DeepSeek when you need:

- A cost-effective engine for content generation at volume, embedded in your own pipeline (where you build checks, templates, and human review).

DeepSeek and ChatGPT in AI Development

For product teams, AI development is rarely “just pick a model.” It’s building a system that can retrieve the right context, follow policies, call tools safely, and produce outputs your app can trust. In a practical DeepSeek vs ChatGPT decision, the real question is whether you’re choosing a model to ship inside your product or a platform to accelerate team workflows.

DeepSeek in AI Development

DeepSeek fits well when you want LLMs as infrastructure. The DeepSeek API models (deepseek-chat and deepseek-reasoner) support 128K context, JSON output, and tool calls, which are key building blocks for agents, RAG pipelines, and structured automation.

This makes DeepSeek a solid option for:

- RAG + assistants that must return consistent JSON for your frontend or workflow engine

- Agent tool routing where the model chooses functions and returns machine-readable results

- Cost-aware systems where token-based pricing and caching can matter at scale
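The tool-routing item above can be sketched as a small dispatcher. It assumes the model returns tool calls in the OpenAI-style shape (a `function` object with a `name` and a JSON-encoded `arguments` string). The `get_order_status` tool and the `TOOLS` registry are hypothetical stand-ins for your own functions.

```python
import json


def get_order_status(order_id: str) -> dict:
    """Hypothetical tool; a real one would query your order system."""
    return {"order_id": order_id, "status": "shipped"}


# Allow-list of functions the model is permitted to call.
TOOLS = {"get_order_status": get_order_status}


def dispatch_tool_call(tool_call: dict) -> str:
    """Run one tool call and return a JSON string to send back to the model."""
    function = tool_call["function"]
    name = function["name"]
    if name not in TOOLS:
        return json.dumps({"error": f"unknown tool: {name}"})
    try:
        arguments = json.loads(function["arguments"])
    except json.JSONDecodeError:
        return json.dumps({"error": "arguments were not valid JSON"})
    try:
        result = TOOLS[name](**arguments)
    except TypeError as exc:
        # Raised for missing, unexpected, or non-mapping arguments.
        return json.dumps({"error": f"bad arguments: {exc}"})
    return json.dumps(result)
```

Keeping an explicit allow-list and returning errors as data, rather than raising, means a malformed model response degrades into a retry instead of an application crash.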

ChatGPT in AI Development

ChatGPT is powerful for development because it compresses the iteration loop. GPT-5.2 is the default model and supports Auto switching (Instant/Thinking) plus built-in tools like web search, data analysis, file analysis, image analysis, Canvas, image generation, Memory, and Custom Instructions.

That changes many AI builds from “model + glue code” to “prototype inside a workspace,” especially for:

- Designing prompts, evaluation sets, and edge-case tests quickly

- Debugging agent behavior with files/logs in-context

- Helping teams standardize workflows via custom GPTs (instructions + knowledge + capabilities)

Use this simple model-selection lens:

If you’re building AI features into a SaaS product, DeepSeek often feels like a flexible component you can architect around. If you’re accelerating product discovery, documentation, QA, and internal workflows, ChatGPT tends to win on speed-to-outcome because the “developer environment” is already packaged.

Final Verdict: DeepSeek vs ChatGPT

The neutral, business-realistic conclusion is that DeepSeek vs ChatGPT is best decided by your operating model.

Choose DeepSeek if your priority is control: open weights, MIT licensing, the ability to self-host, and the ability to treat the model as infrastructure you can tune and integrate. DeepSeek‑R1’s open-source release and the MoE efficiency story behind DeepSeek‑V3 are central advantages for engineering-led organizations and cost-sensitive product deployments.

Choose ChatGPT if your priority is time-to-outcome: GPT‑5.2 Auto, explicit Instant/Thinking modes, a model picker for paid tiers, and a complete tool suite (search, data analysis, files, images, Canvas, image generation, memory, custom instructions) that turns “AI quality” into daily workflow acceleration. The hard limits (message caps and tier policies) are real, but they’re also transparent and operationally predictable.

In practice, many SaaS and enterprise teams will use both: DeepSeek as a deployable model layer for product features, and ChatGPT as the day-to-day AI workspace for strategy, analysis, content, and collaboration.

DeepSeek vs ChatGPT Comparison FAQs

1) How good is ChatGPT vs DeepSeek for studying?

ChatGPT is usually better for studying because it explains concepts conversationally, adapts its tone, and helps you practice with examples and quizzes. DeepSeek is a strong choice when what you are studying is math-, logic-, or coding-heavy and you want structured, step-by-step problem solving.

2) Is there a DeepSeek AI vs ChatGPT comparison chart?

Yes. A simple chart usually compares model focus, reasoning strength, tools, pricing, and best use cases. In this blog, you can use the “Key Differences at a Glance” table as your DeepSeek AI vs ChatGPT comparison chart and expand it with benchmarks if needed.

3) Is DeepSeek better than ChatGPT in math?

DeepSeek often performs very strongly in math and structured reasoning tasks, especially when the prompt is clear and problem-solving is step-based. ChatGPT is also strong, but DeepSeek may feel more consistent on pure math, while ChatGPT can win when explanation quality and context matter.

4) Which AI is better than ChatGPT now?

There isn’t one AI that is universally “better” than ChatGPT for every use case. Some models can outperform ChatGPT on specific tasks like math, coding benchmarks, or cost efficiency. The best choice depends on your goals, tools needed, and how you plan to deploy it.

5) Which AI is better for training industry-specific models?

DeepSeek is usually better if you want to fine-tune and deploy a model for an industry use case with more control over infrastructure and behavior. ChatGPT is better if you want faster rollout using instructions, custom GPTs, and tool-enabled workflows without managing model training.

6) Which AI subscription is worth it: DeepSeek vs ChatGPT?

ChatGPT is usually worth paying for if you want a polished workspace with tools like file analysis, data analysis, and multimodal features. DeepSeek can be more cost-effective if you mainly need API usage at scale or plan to build and optimize your own workflows.

7) Which is more accurate: DeepSeek or ChatGPT?

Accuracy depends on the task. DeepSeek can be very strong for structured logic, math, and technical prompts. ChatGPT is often more accurate for mixed-context questions because it handles nuance, intent, and longer conversational flow more reliably, especially when paired with tools for validation.

8) Is DeepSeek better than ChatGPT for coding?

DeepSeek can be strong for structured coding tasks, logic-heavy problems, and self-hosted workflows. ChatGPT is often better for end-to-end coding help because it explains code clearly and supports tools like file analysis and data analysis in the ChatGPT app.

9) What is the main difference in a DeepSeek vs ChatGPT comparison?

The biggest difference is how they’re used. DeepSeek is often chosen for open-source flexibility and custom deployments. ChatGPT is a full product experience with GPT-5.2, built-in tools, and a polished interface for everyday work.

10) Is DeepSeek free to use compared to ChatGPT?

DeepSeek models can be used through open-source releases or via paid API usage (pricing depends on tokens and model). ChatGPT offers a free tier with limits and paid plans that provide higher usage, advanced tools, and better performance.

11) What should businesses choose in a DeepSeek AI vs ChatGPT comparison?

Choose DeepSeek if you need deployment control, customization, or on-prem hosting. Choose ChatGPT if you want quick adoption, strong productivity features, and integrated tools for teams. Many businesses use both, depending on the workflow.